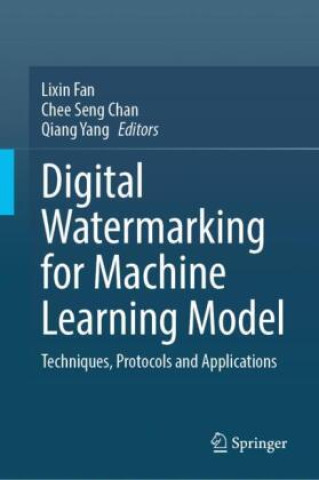

Digital Watermarking for Machine Learning Model

Techniques, Protocols and Applications

Language: English

368 b

Machine learning (ML) models, especially large pretrained deep learning (DL) models, are of high economic value and must be properly protected with regard to intellectual property rights (IPR). Model watermarking methods embed watermarks into a target model so that, if the model is stolen, its owner can extract the predefined watermarks to assert ownership. These methods draw on widely used techniques such as backdoor training, multi-task learning, and decision boundary analysis to generate secret conditions, known only to the model owner, that constitute the model's watermarks or fingerprints. They have little or no effect on model performance, which makes them applicable in a wide variety of contexts. To be robust, embedded watermarks must remain detectable under a range of adversarial attacks that attempt to remove them. The efficacy of model watermarking methods is showcased in diverse applications, including image classification, image generation, image captioning, natural language processing, and reinforcement learning.

About the book

Language: English
